Virtual Reality Volume Rendering for Real-Time Visualization of Radiologic Anatomy

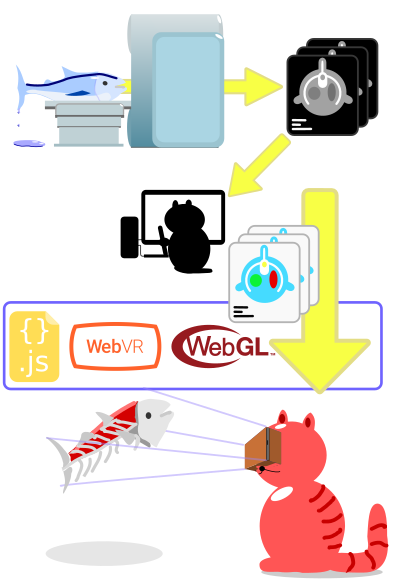

The 3D radiologic volume data (MRI & CT) at sectional-anatomy.org is visualized on a 2D computer screen. Virtual reality (VR) promises to improve the visualization, and thus the understanding, of this 3D data by presenting it in a natural way that preserves depth information.

A new Web technology (WebVR), in combination with powerful smartphone graphics processors and inexpensive virtual reality viewers (Google Cardboard), has made virtual reality cheap and easily obtainable.

These new developments, and the aforementioned possibility of a more natural visualization, have led me to implement an experimental VR webpage for an MRI scan.

Try It

You need an Android phone with the Google Chrome browser and a virtual reality viewer (Google Cardboard) to explore the MRA Brain in VR.

Available Visualizations and Sequences

At the time of writing, only Google Chrome for Android has native WebVR support. See webvr.info for updated information on WebVR compatibility.

User Experience

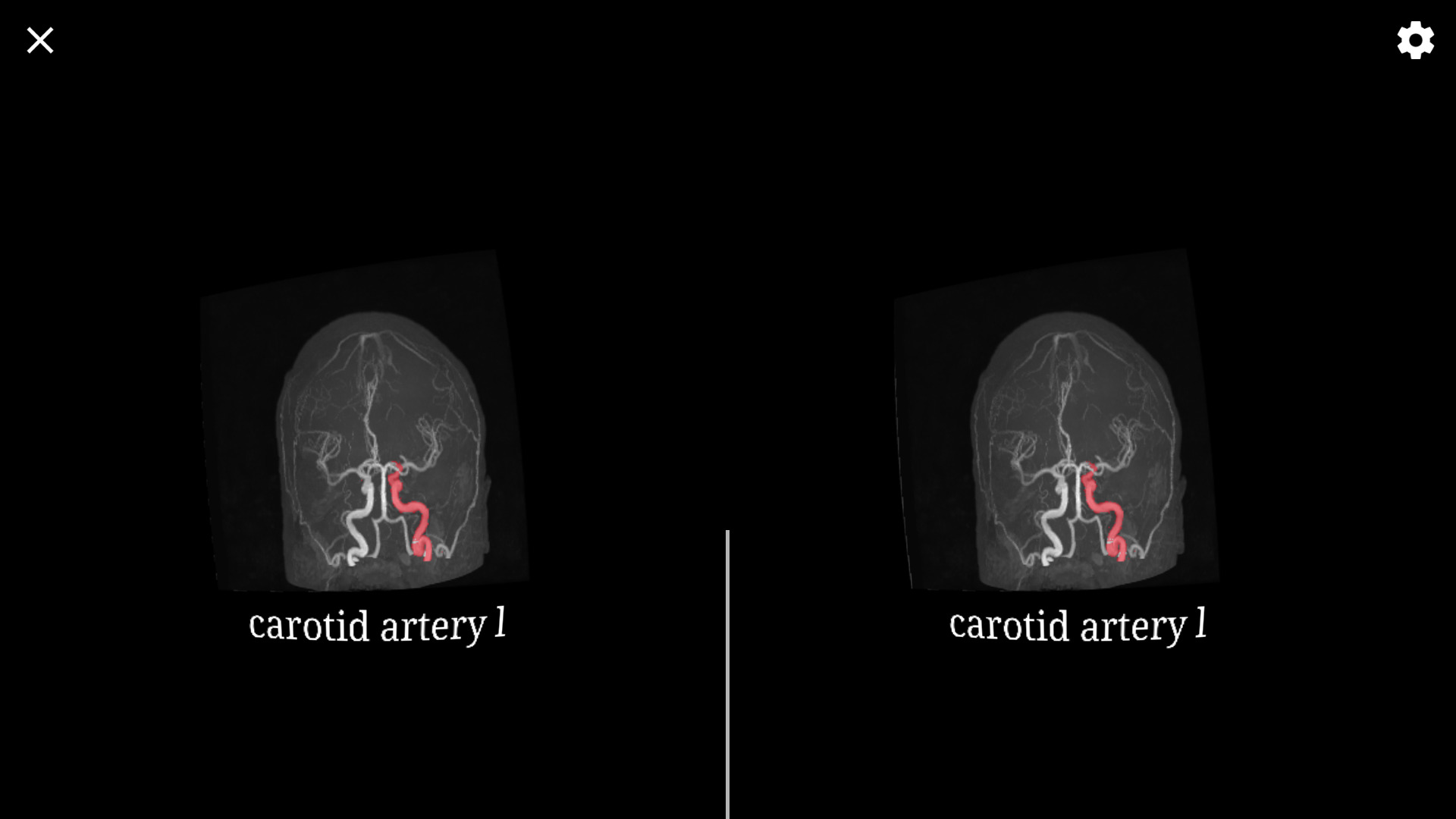

Virtual reality uses a different image for each eye, allowing depth to be perceived by stereoscopic vision. Also unlike a computer screen, the VR viewer covers the user's field of view, and head movement is tracked.

Depth Information

In my experience, the depth perception allows the spatial extent of the data to be better discerned.

Contrary to my expectation, a MIP (maximum intensity projection) rendering works well. This is surprising, since it is not a realistic rendering. Most obviously, voxels may appear to be in front when they are in fact behind other ones, since their order along a view ray is not considered.
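To illustrate why the order along a view ray does not matter in a MIP, here is a minimal sketch. It is not the code of the renderer described here; the function name `mipAlongRay` and the plain array of samples are assumptions made for the example.

```typescript
// Maximum intensity projection along a single view ray.
// `samples` holds the voxel intensities met along the ray (any order).
// Returns -Infinity for an empty ray.
function mipAlongRay(samples: number[]): number {
  let max = -Infinity;
  for (const s of samples) {
    if (s > max) {
      max = s;
    }
  }
  return max;
}

// Looking at the same voxels from the opposite side reverses their order,
// yet the pixel value stays the same: the depth order is lost.
const frontToBack = [0.1, 0.9, 0.3];
const backToFront = [...frontToBack].reverse();
console.log(mipAlongRay(frontToBack) === mipAlongRay(backToFront)); // true
```

Because only the maximum survives, a bright voxel deep inside the volume hides nothing and is hidden by nothing, which is why it can appear to lie in front of structures that are actually closer.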

Still, a volume cannot be perceived in its entirety. Stereoscopic rendering at best doubles the number of channels (from RGB to 2xRGB), but it does not add a third dimension to the two of the screen. Surfaces can therefore be visualized quite well, since they require only a single additional depth value per pixel. But to get all the information from a volume, the individual slices are still needed.

Rotating the Volume

Since Cardboard devices only track the viewing direction (angles) but not the position of the head (xyz), the user cannot walk around the volume in VR. A different approach was needed. As in the Google Cardboard Demo "Exhibit", the volume is always kept centered in the user's field of view and is rotated to reflect the head rotation.
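One way to picture this is sketched below. It assumes the head orientation is available as a unit quaternion `[x, y, z, w]` and that the volume is rotated by the inverse (conjugate) of that quaternion in eye space; the actual mapping used on the page may differ, and the helper `volumePoseInEyeSpace` is hypothetical.

```typescript
type Quat = [number, number, number, number]; // [x, y, z, w], unit length
type Vec3 = [number, number, number];

// Inverse of a unit quaternion.
function conjugate(q: Quat): Quat {
  return [-q[0], -q[1], -q[2], q[3]];
}

// Rotate v by unit quaternion q: v' = v + w*t + cross(u, t), t = 2*cross(u, v)
function rotate(q: Quat, v: Vec3): Vec3 {
  const [x, y, z, w] = q;
  const tx = 2 * (y * v[2] - z * v[1]);
  const ty = 2 * (z * v[0] - x * v[2]);
  const tz = 2 * (x * v[1] - y * v[0]);
  return [
    v[0] + w * tx + (y * tz - z * ty),
    v[1] + w * ty + (z * tx - x * tz),
    v[2] + w * tz + (x * ty - y * tx),
  ];
}

// Hypothetical helper: pose of the volume relative to the eyes.
function volumePoseInEyeSpace(head: Quat, distance: number): { position: Vec3; rotation: Quat } {
  return {
    position: [0, 0, -distance], // always straight ahead of the viewer
    rotation: conjugate(head),   // undo the head rotation
  };
}

// Head turned 90 degrees to the left (rotation about the y axis).
const s = Math.SQRT1_2;
const head: Quat = [0, s, 0, s];
const pose = volumePoseInEyeSpace(head, 2);
// The volume's local +x axis now points toward the viewer (eye-space +z),
// so the viewer sees the side of the volume as if having walked around it.
console.log(rotate(pose.rotation, [1, 0, 0])); // approximately [0, 0, 1]
```

With this choice the volume keeps a fixed orientation in the world while its position follows the gaze, so turning the head has the effect of orbiting around it.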

Selecting Anatomy

Querying the volume and selecting an anatomy from the segmentation to get its label is achieved with a reticle.

The anatomy behind the reticle that contributes most to the value of the center pixel is selected. The selection process can be toggled with a click (the Cardboard button).
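The selection rule can be sketched as follows. This is an illustration only: the `RaySample` shape, the notion of a per-sample `contribution`, and the function `selectLabel` are assumptions, not the page's actual data structures. For a MIP, the sample with the maximum intensity would carry the whole contribution.

```typescript
// One sample along the ray through the center pixel (hypothetical shape).
interface RaySample {
  label: number;        // segmentation label of the voxel
  contribution: number; // how much this sample adds to the pixel value
}

// Return the label whose samples contribute most, or undefined for an empty ray.
function selectLabel(samples: RaySample[]): number | undefined {
  const totals = new Map<number, number>();
  for (const s of samples) {
    totals.set(s.label, (totals.get(s.label) || 0) + s.contribution);
  }
  let best: number | undefined = undefined;
  let bestTotal = -Infinity;
  totals.forEach((total, label) => {
    if (total > bestTotal) {
      best = label;
      bestTotal = total;
    }
  });
  return best;
}

// Label 1 wins: its two samples together outweigh the single sample of label 2.
console.log(selectLabel([
  { label: 1, contribution: 0.2 },
  { label: 2, contribution: 0.5 },
  { label: 1, contribution: 0.4 },
])); // 1
```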

Only two degrees of freedom of the head rotation are used to position the reticle. Or, more precisely: the reticle is fixed, and the head rotation is used to rotate the volume, which is somewhat unintuitive. This makes selecting a specific anatomy difficult or even impossible.

Implementation Details

The existing volume renderer for WebGL was adapted to support the new WebVR standard to allow virtual reality rendering in the browser.

As in most VR renderers, a forward renderer is used. The reason differs, though: normally this is done to be able to use MSAA (see Optimizing the Unreal Engine 4 Renderer for VR, Pete Demoreuille), which is of no use for volume rendering. Here, the reason is that the ability of the existing WebGL renderer to output an image of the volume and its segmentation simultaneously is not needed. On the contrary, the two need to be combined in one image. Thus, the renderer was adapted to a single stage. As a side effect, color renderings are now possible without using the WEBGL_draw_buffers extension.
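What combining the two into one image can mean per pixel is sketched below on the CPU. The real renderer does this in a shader; the color table `labelColors`, the `tint` parameter, and the blend formula are assumptions chosen for illustration.

```typescript
type RGB = [number, number, number];

// Hypothetical label-to-color table; label 0 stands for "no anatomy".
const labelColors: Record<number, RGB> = {
  0: [1, 1, 1],
  1: [1, 0.2, 0.2],
  2: [0.2, 0.4, 1],
};

// Tint the gray volume intensity with the color of the segmentation label.
// tint = 0 gives a plain gray image, tint = 1 the full label color.
function shadePixel(intensity: number, label: number, tint: number = 0.5): RGB {
  const c: RGB = labelColors[label] || [1, 1, 1];
  return [
    intensity * ((1 - tint) + tint * c[0]),
    intensity * ((1 - tint) + tint * c[1]),
    intensity * ((1 - tint) + tint * c[2]),
  ];
}

console.log(shadePixel(0.8, 0)); // unlabeled voxel stays gray: [0.8, 0.8, 0.8]
console.log(shadePixel(1, 1, 1)); // fully tinted: [1, 0.2, 0.2]
```

Since the result is a single color per pixel, one render target suffices, which fits the observation that multiple draw buffers are no longer needed.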

Since multiple layers are not supported by WebVR implementations, some additional overlay renderers are injected before or after the volume rendering.

Please note that, due to its experimental nature, the source code is sometimes confusing or even confused.

Anti-Aliasing

Anti-aliasing is recommended for VR due to the large apparent pixel size. Since MSAA is not applicable, full-screen anti-aliasing is used instead. Some aliasing is also caused by the insufficient linear interpolation of the volume voxels (see An Evaluation of Reconstruction Filters for Volume Rendering, Stephen R. Marschner and Richard J. Lobb), or even the nearest-neighbor interpolation of the segmentation.
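The two interpolation schemes mentioned above can be compared in one dimension. This sketch uses plain arrays and the hypothetical helpers `sampleLinear` and `sampleNearest`; the renderer itself samples 3D textures on the GPU.

```typescript
// Clamp a sample position to the valid index range of the data.
function clampPos(x: number, n: number): number {
  return Math.min(Math.max(x, 0), n - 1);
}

// Linear interpolation between the two neighboring voxels.
function sampleLinear(data: number[], x: number): number {
  const p = clampPos(x, data.length);
  const i0 = Math.floor(p);
  const i1 = Math.min(i0 + 1, data.length - 1);
  const t = p - i0;
  return data[i0] * (1 - t) + data[i1] * t;
}

// Nearest-neighbor lookup: returns an existing voxel value unchanged.
function sampleNearest(data: number[], x: number): number {
  return data[Math.round(clampPos(x, data.length))];
}

// Intensities can be blended ...
console.log(sampleLinear([0, 10], 0.25)); // 2.5
// ... but labels cannot: halfway between labels 1 and 3 is not label 2.
console.log(sampleLinear([1, 3], 0.5));   // 2, an unrelated anatomy
console.log(sampleNearest([1, 3], 0.25)); // 1
```

This is why the segmentation is looked up with nearest-neighbor sampling even though it produces blocky, aliased label borders.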

Dynamic Resolution

The WebVR layers API would easily allow a dynamic resolution (as recommended in Alex Vlachos, Advanced VR Rendering Performance, GDC 2016) to prevent dropped frames. The inverted rotation and constant centering of the volume would lead to discrepancies with reprojection / timewarp in the case of a dropped frame. Therefore, it is important not to drop frames and to hold the frame rate. But there is no way to query the target frame rate, and furthermore the WebGL EXT_disjoint_timer_query extension needed to measure GPU time is not widely supported (e.g. not on my phone). Thus, this is not implemented.
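Although dynamic resolution is not implemented here, the control loop behind it is simple. The sketch below assumes that a measured GPU frame time is available and that the display runs at 60 Hz; both are assumptions, since neither can be obtained reliably as explained above. The function name `nextRenderScale` and the thresholds are likewise hypothetical.

```typescript
// Choose the render scale for the next frame from the last GPU frame time.
// budgetMs defaults to a 60 Hz display (assumption, cannot be queried).
function nextRenderScale(
  scale: number,
  frameMs: number,
  budgetMs: number = 1000 / 60,
  minScale: number = 0.5,
  maxScale: number = 1.0
): number {
  let next = scale;
  if (frameMs > 0.9 * budgetMs) {
    next = scale * 0.9;  // close to or over budget: render fewer pixels
  } else if (frameMs < 0.7 * budgetMs) {
    next = scale * 1.05; // comfortable headroom: slowly restore resolution
  }
  return Math.min(maxScale, Math.max(minScale, next));
}

console.log(nextRenderScale(1.0, 20)); // 0.9, the frame took too long
console.log(nextRenderScale(1.0, 5));  // 1, already at full resolution
```

Shrinking quickly and growing slowly is a common choice to avoid oscillation; how the resulting scale would be applied to the rendered area is not shown.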

Discussion

Virtual reality can improve the understanding of radiologic volume data, but looking at the slices remains necessary to get all the information. Due to the large amount of information in the volume data, restricting what is displayed might prove advantageous, provided the significant information is retained.

A next step is to integrate the WebVR renderer into the sectional-anatomy.org viewer. Furthermore, support for room-scale VR with tracked hand controllers could be considered, but the implementation would require considerable work.